The U.S. AI Stack, Explained Through a Car

A deeper look beneath the metaphor — chips, CEOs, news, and the layer reshaping everything in 2026.

A friend — let’s call her Mary — called me on Monday.

“Ana, I’m so confused. I’ve been using ChatGPT lately, every day, all day. Now everyone is raving about Claude again. Then GPT-5.5 dropped. But I read recently that Gemini was ‘the smartest one.’ Another person is telling me to look at Manus, then another one says Perplexity is better because it can use different models. I consider myself reasonably tech-savvy — I keep up — and I’m lost in the middle of all of it.”

I’ve been getting some version of this constantly lately. The names alone are enough to disorient anyone — even people who pride themselves on staying on top of things.

So I went back to basics with her. Mary is a car person. I built the metaphor around that.

Three Layers. That’s It.

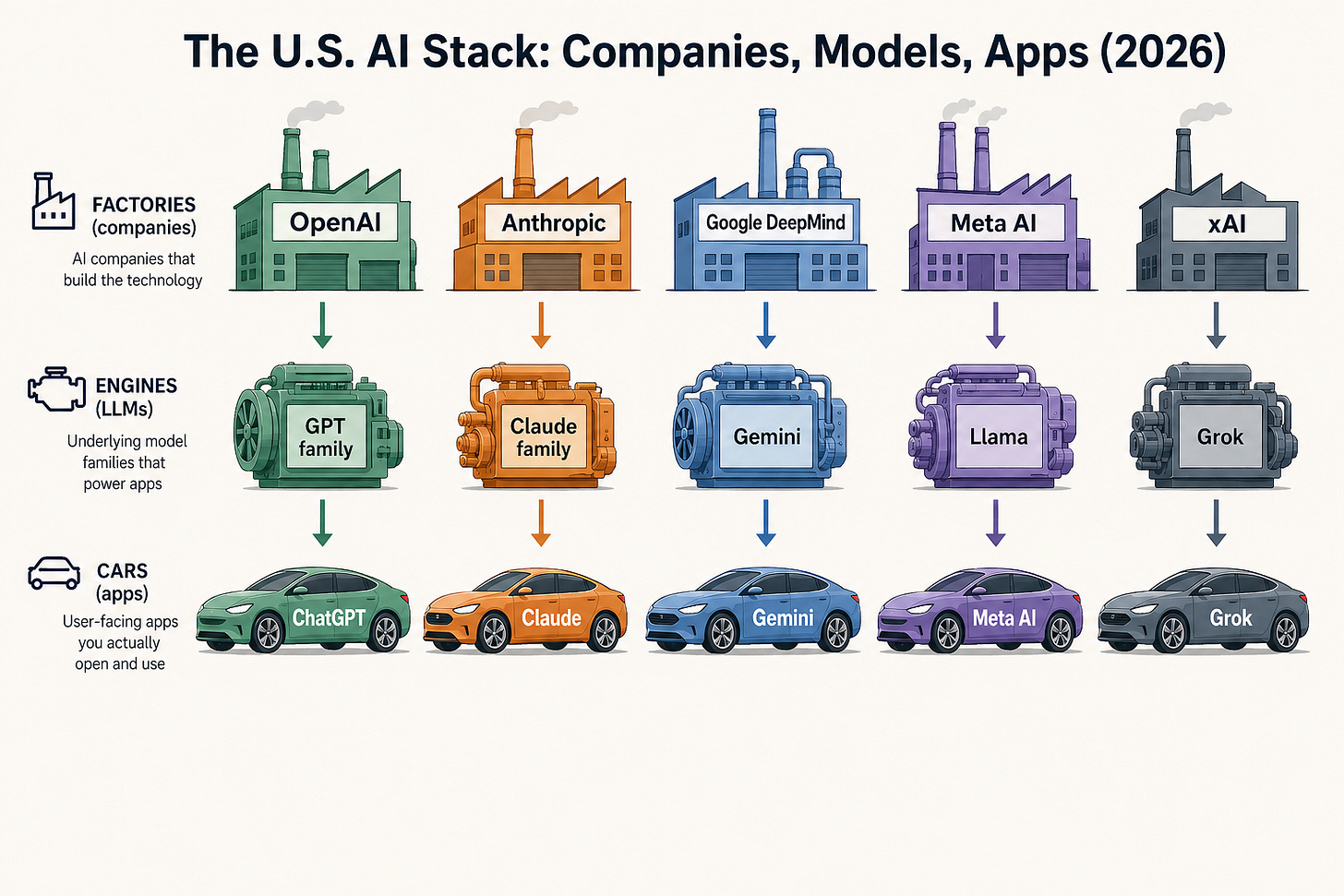

The Factory is the company that builds the AI — the major U.S. ones are OpenAI, Anthropic, Google DeepMind, Meta AI, xAI. Each shaped by a different worldview, different leadership, different values that flow into everything they make.

The Engine is the underlying model: the GPT family from OpenAI’s Factory, the Claude family from Anthropic, Gemini from Google DeepMind, Llama from Meta, Grok from xAI. The raw power. One Factory usually makes several.

The Car is the app — what you actually open on your screen. ChatGPT, Claude, Gemini, the Grok app. The interface you drive.

Mary got it almost immediately. And the tip about Perplexity her friend had given her suddenly had a home in the picture: tools like Perplexity are cars that can swap their engine depending on the question or settings — same body, different power source under the hood.
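For readers who think in code, here is a minimal sketch of the three layers and the engine swap. It is only an illustration of the metaphor, not how any of these products are actually built. The class names and the `MultiEngineCar` app are hypothetical, and the sketch makes no claim about which engines a real tool like Perplexity offers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Factory:
    name: str  # the company that builds the AI


@dataclass(frozen=True)
class Engine:
    family: str  # the underlying model family
    factory: Factory


@dataclass
class Car:
    name: str  # the app you open on your screen
    engines: list[Engine]  # one for most Cars, several for a multi-engine Car
    selected: int = 0

    def swap_engine(self, family: str) -> None:
        """Switch to another available Engine; the Car itself stays the same."""
        for i, engine in enumerate(self.engines):
            if engine.family == family:
                self.selected = i
                return
        raise ValueError(f"{self.name} has no {family} engine")

    def describe(self) -> str:
        engine = self.engines[self.selected]
        return (
            f"{self.name} (Car) runs on {engine.family} (Engine) "
            f"from {engine.factory.name} (Factory)"
        )


openai = Factory("OpenAI")
anthropic = Factory("Anthropic")
gpt = Engine("GPT", openai)
claude = Engine("Claude", anthropic)

chatgpt = Car("ChatGPT", [gpt])
# Hypothetical multi-engine Car, standing in for tools that let you pick the model.
multi = Car("MultiEngineCar", [gpt, claude])

print(chatgpt.describe())
multi.swap_engine("Claude")
print(multi.describe())
```

The point of the sketch is that `swap_engine` changes only what is under the hood: the Car keeps its name and its interface while the Factory behind the answer changes.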

That’s the simple picture as of, oh, a year ago.

What Changed in 2026: The Harness Layer

Here’s what’s been making the AI conversation feel especially noisy this year: the three-layer picture is no longer quite enough.

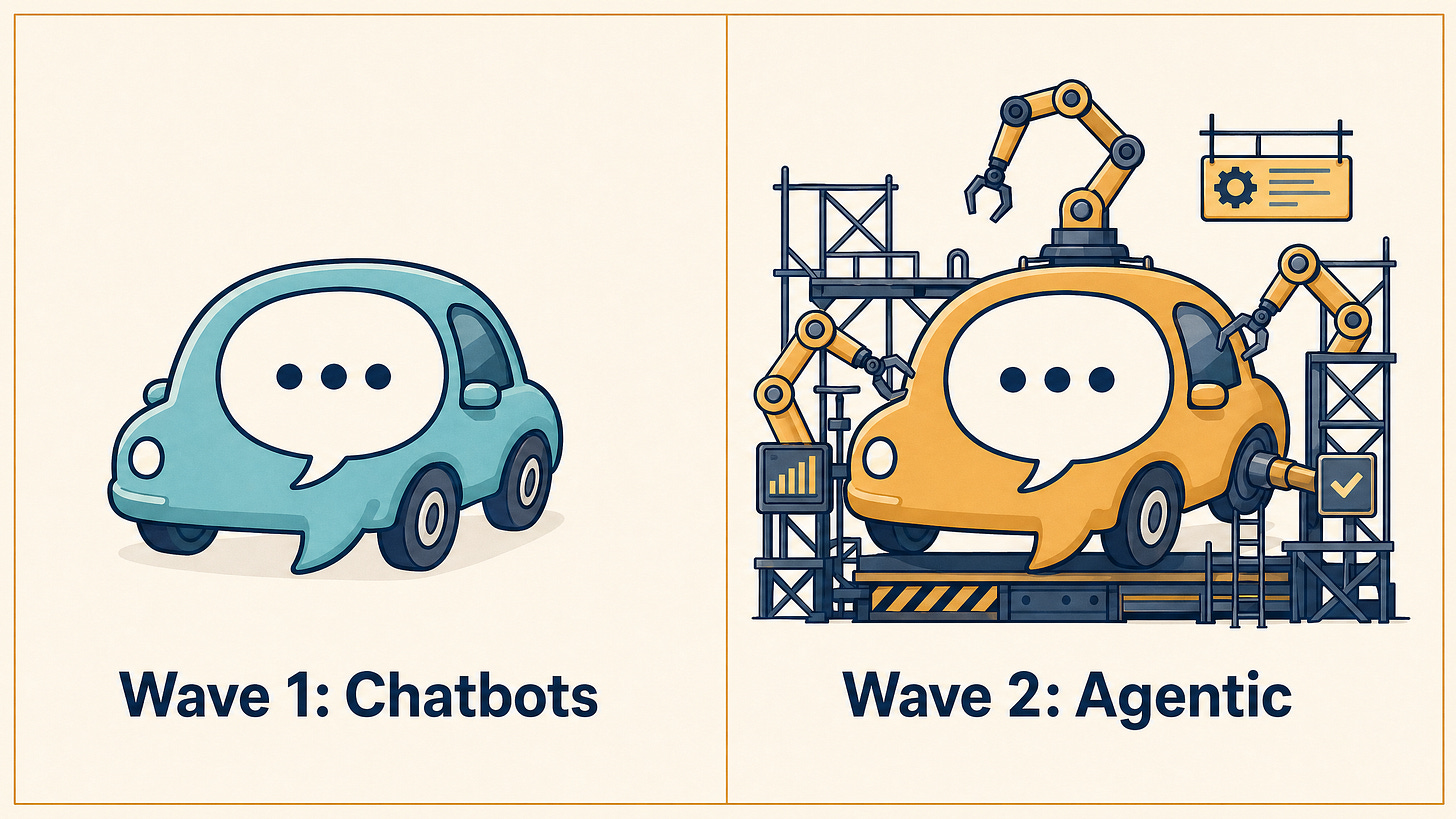

When the first wave of AI hit, the Cars were chatbots. We typed; they replied. Useful, but limited.

The current wave is different. The big conversation is about agentic capabilities — AI that can take actions, use tools, read your files, run code, chain multiple steps together. The Car becomes more like a robot that does things for us.

That capability doesn’t live only in the Engine. It emerges from the layer between the Engine and the Car.

Ethan Mollick, whose thinking I follow, calls this layer the Harness.¹ We can see it as the agentic scaffolding around the model: which tools the model can reach, what actions it is allowed to take, and how much of a multi-step task it can carry out on its own.

Same Engine, different Harness, very different behavior.

This is exactly why Anthropic’s Claude Code (a coding tool) and Cowork (a workspace tool) feel so different from chatting with Claude on the website — even though all three sit on top of the same Claude Engine, made in the same Anthropic Factory. Same Factory, same Engine — wildly different Harnesses.
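A toy sketch can make "same Engine, different Harness" concrete. Everything here is an assumption for illustration: `toy_engine` is a stand-in function rather than a real model, and the `Harness` class and `word_count` tool are hypothetical names, not anyone's actual API.

```python
from typing import Callable

Tool = Callable[[str], str]


def toy_engine(task: str, available_tools: list[str]) -> str:
    """Stand-in for a model: asks for a tool if one is on offer, else just answers."""
    if "word_count" in available_tools:
        return "CALL word_count"
    return "I can only describe how to count the words."


class Harness:
    """The scaffolding around the Engine: decides which tools exist and runs them."""

    def __init__(self, tools: dict[str, Tool]):
        self.tools = tools

    def run(self, task: str) -> str:
        decision = toy_engine(task, list(self.tools))
        if decision.startswith("CALL "):
            tool_name = decision.removeprefix("CALL ")
            # The Harness, not the Engine, is what actually executes the action.
            return self.tools[tool_name](task)
        return decision


def word_count(text: str) -> str:
    return f"{len(text.split())} words"


chat_harness = Harness(tools={})  # chatbot-style: no tools at all
agent_harness = Harness(tools={"word_count": word_count})  # agent-style: one tool

task = "count the words in this sentence"
print(chat_harness.run(task))
print(agent_harness.run(task))
```

Both harnesses call the exact same `toy_engine`. The only difference is which tools the Harness puts within reach, and that alone decides whether the result is a description of the work or the work itself.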

Now that you’ve seen the full four-layer picture, I want to acknowledge what even that picture hides.

What the Metaphor Hides

A useful metaphor is also an oversimplification, and there are at least three things underneath this one that are important to keep in mind.

The chips. Engines need raw material. The Engines you’ve been hearing about (the GPT family, from GPT-3.5 to GPT-5.5 Pro; the Claude family, from Haiku to Opus; Gemini, from Gemini 2 to Gemini 3 Deep Think) are trained and run on enormous fleets of specialized chips. That supply leans heavily on Nvidia GPUs, alongside Google TPUs, Amazon’s Trainium (for training) and Inferentia (for running models), and other custom silicon. In 2026, the AI conversation is also a chip conversation. The chips are why the Engines are so expensive to train, why energy use is exploding, and why the geography of where these chips can be manufactured matters geopolitically.

The data. Engines are trained on vast amounts of text, code, images, and more. Where that data comes from — public web, licensed sources, synthetic data, user data, copyrighted books — is itself a contested question working its way through courts. The Factories make different choices here, with different lawyers and different consequences.

The humans behind the Factories. This is the part most likely to land in your news feed. The CEOs of these companies are public figures — and the names you’ve been seeing for months have specific people, philosophies, and backgrounds behind them.

Quick guide:

OpenAI — Sam Altman, one of the most public-facing of the AI CEOs. Known in part for the November 2023 episode in which the board removed him and, days later, reinstated him. ChatGPT is OpenAI’s Car.

Anthropic — Dario Amodei (CEO) and his sister Daniela Amodei (President), both formerly at OpenAI. Anthropic was founded in 2021 with a stated focus on AI safety research. Claude is their Car.

Google DeepMind — Demis Hassabis, a former chess prodigy and neuroscientist, leads Google’s AI division (the merged DeepMind + Google Brain entity). Gemini is the Car.

Meta AI — Under Mark Zuckerberg’s broader strategy, Meta has taken an open-weights approach with the Llama family — releasing model weights publicly, which is a different posture from the others. Meta AI’s app is the Car; Llama is the Engine family.

xAI — Elon Musk’s AI company. Grok is the Car (and confusingly, also the name of the model family).

You don’t need to track all of this to use AI well. But knowing whose Factory you’re in helps explain why the Cars feel different — even when the Engine specs look similar on paper.

Once you can see all of this — the layers, what powers them, who’s behind them — the question stops being which AI to use. It becomes a leadership question.

The Leadership Imperative

Here’s what I think the real insight is, especially for leaders:

When we — and our teams — can’t see the layers, we make scattered choices. We lose precious time. We blame the technology. Multiply that across an organization, and what looks from the outside like “They are working with AI” starts to feel from the inside like “This is not helping.”

You can’t lead a team through AI adoption by only telling them which tools to use. They need the big picture, too. They need to understand how everything comes together, and what the real goals and vision are.

This isn’t about “What’s the best prompt?” It’s about asking different questions: Which Engines are best for the job at hand? Which Harnesses can we leverage? Which Car should we be driving to arrive where we want to go?

Sometimes the Factory matters too — its values, its safety posture, its approach to your data.

These are strategic choices. And right now, most organizations are making them without understanding the architecture — often just by delegating to IT.

Surfing the 100-foot AI wave isn’t really about being “good at AI.” For leaders, it’s about being awake to where the choices are actually happening — and helping the people you lead see them too.

When your team has said “AI didn’t work,” which of the four layers were they actually disappointed in?

References

¹ Mollick, E. (Feb 2026). A Guide to Which AI to Use in the Agentic Era. One Useful Thing. https://www.oneusefulthing.org/p/a-guide-to-which-ai-to-use-in-the